What Is AI SEO?

AI SEO is the practice of optimizing your brand, content, and digital presence to appear in AI-generated search results. The five platforms that matter most in 2026: Google AI Overviews, ChatGPT, Perplexity, Gemini, and Claude.

You will also see this discipline called Generative Engine Optimization (GEO), Answer Engine Optimization (AEO), LLM SEO, or LLMO. These terms describe overlapping strategies with slightly different emphasis. AI SEO is the umbrella that contains all of them.

The core objective is simple. When someone asks an AI a question relevant to your business, your brand or content should be cited, recommended, or referenced in the response. Measuring this starts with understanding your AI Share of Voice.

AI SEO is the optimization of content, technical infrastructure, and brand signals so that AI-powered search platforms (ChatGPT, Google AI Overviews, Perplexity, Gemini, Claude) cite, reference, or recommend a brand in their generated responses. It encompasses GEO, AEO, LLM SEO, and LLMO as subdisciplines.

Traditional SEO earns clicks through blue links. AI SEO earns citations, recommendations, and mentions inside AI-generated answers. Both matter. Neither replaces the other.

Why Does AI SEO Matter in 2026?

AI SEO matters because billions of people now use AI platforms as their primary search interface, and the brands that optimize for these systems capture citations that traditional SEO alone cannot reach. The numbers tell the story. AI referral traffic hit 1.1 billion visits in June 2025, growing 357% year over year (Similarweb), and AI platforms now account for 12-18% of total referral traffic, up from 5-8% in late 2024 (upGrowth, 2026).

Google AI Overviews now reach over 2 billion monthly users across 200+ countries (Google Q2 2025 earnings, July 23, 2025). AI Mode has crossed 100 million monthly active users in the U.S. and India combined (Google Q2 2025 earnings).

ChatGPT serves 810 million daily active users (Superlines, 2026). The Gemini app has surpassed 750 million monthly active users (Google Q4 2025 earnings, February 4, 2026). Perplexity reached 170 million monthly visits (Similarweb, 2026).

These are not niche platforms. They are primary search interfaces for billions of people.

Here is the critical problem for businesses relying solely on traditional SEO. An Ahrefs study from August 2025 found that URLs cited by AI systems overlap with Google's top 10 organic results only 12% of the time. In other words, 88% of AI-cited URLs do not appear in Google's top 10 for the same query.

The old playbook of "rank on page one and win" is incomplete. Businesses that only optimize for Google's ten blue links miss the majority of AI citation opportunities. The playbook now requires a second layer of optimization specifically designed for how AI models retrieve, evaluate, and cite sources.

Early movers have an asymmetric advantage. In practice, brands with consistent presence across training data, citations, and real-time retrieval tend to maintain their citation positions over time.

The sooner your content and brand signals are optimized for AI, the harder it becomes for competitors to displace you. For more on measuring this, see the full breakdown of what is AI visibility.

How Does AI SEO Differ from Traditional SEO?

AI SEO and traditional SEO share DNA but differ in mechanics, signals, and outcomes.

| Factor | Traditional SEO | AI SEO |
|---|---|---|
| Goal | Rank in organic blue links | Get cited, recommended, or mentioned in AI responses |
| Primary signal | Backlinks, on-page relevance, technical health | Entity authority, content extractability, cross-platform brand consensus |
| Content format | Keyword-optimized pages | Structured, evidence-rich passages with direct answers |
| Success metric | Ranking position, CTR, organic traffic | AI Share of Voice, citation frequency, brand mention rate |
| User interaction | Click to website, then read | Answer delivered in AI interface, optional click-through |
| Update cycle | Algorithm updates (months apart) | Model updates, retrieval changes (continuous) |
| Competition unit | Page vs page | Passage vs passage |
| Schema importance | Helpful for rich snippets | Critical for entity understanding and Knowledge Graph inclusion |

AI SEO does not replace traditional SEO. It builds on top of it. Strong organic rankings, clean site architecture, valid schema markup, and authoritative backlinks all feed into AI systems.

Google's own documentation states that AI Overviews have no extra technical requirements beyond standard Search eligibility (Google Search Central).

The difference is in what you do beyond the basics. Traditional SEO gets you indexed. AI SEO gets you cited.

The best strategies do both. For a deeper look at how to appear in AI search, start with your traditional SEO foundation, then layer on the AI-specific tactics outlined in this guide. For a detailed comparison of the two approaches, see AI search vs traditional SEO.

How Do AI Search Engines Work?

Each AI search platform retrieves and cites information differently. Understanding these differences is essential for targeting your optimization efforts.

ChatGPT operates in two modes. The base model draws from training data (a static snapshot). When search is enabled, it queries the web in real time via Bing and its own browsing infrastructure.

ChatGPT has 810 million daily active users (Superlines, 2026). It accounts for 87% of all AI referral traffic to websites (Semrush, 2026) and referrals convert at 5-16%.

Optimization for ChatGPT requires both strong web presence (for real-time retrieval) and broad brand footprint (for training data inclusion).

Claude (by Anthropic) operates primarily from training data but increasingly supports web search in its responses. When search is enabled, Claude retrieves and cites web sources in real time.

For training-data-based responses, Claude draws from its knowledge base. Optimization for Claude follows the same LLM SEO principles as other training-data-dependent models: broad, consistent brand presence across authoritative sources.

Perplexity is built as an answer engine from the ground up. It searches multiple indexes, reads source pages, and constructs answers with numbered citations. It reached 170 million monthly visits (Similarweb, 2026).

Perplexity prioritizes pages that give direct, well-structured answers. It is particularly sensitive to content freshness and source credibility. Referrals convert at 8-11%.

Gemini uses the Google Search index and Knowledge Graph for retrieval, presenting inline source cards. With 750M+ monthly active users on the app alone, it is a major AI search platform. It shares retrieval mechanics with AI Overviews but operates as a standalone conversational interface.

Google AI Overviews use a process Google calls "query fan-out." Rather than answering from a single search, the system runs multiple related searches, gathers information from diverse sources, and assembles a synthesized response (Google Search Central).

AI Overviews reach over 2 billion monthly users across 200+ countries (Google Q2 2025 earnings). Read more about what triggers an AI Overview.

| Platform | Retrieval source | Citation format | Freshness sensitivity | Key signals | Reported audience |
|---|---|---|---|---|---|
| ChatGPT | Bing index + web browsing | Numbered footnote citations | Moderate to high (browsing-enabled) | Content clarity, structured data, Bing ranking | 810M daily active users (2026) |
| Claude | Training data + web search (when enabled) | Inline references when searching | Low (training data) to high (search mode) | Training data presence, content quality, entity coverage | 157M monthly visits |
| Perplexity | Multi-engine (Google, Bing, own index) | Numbered inline citations | High (real-time search) | Source authority, direct answers, freshness | 170M monthly visits |
| Gemini | Google Search index + Knowledge Graph | Inline source cards | High (real-time) | Google ranking, entity data, structured content | 750M+ monthly active users (app) |
| Google AI Overviews | Google Search index (live) | Inline links to source pages | High (real-time index) | Search ranking, content structure, E-E-A-T | 2B+ monthly users |

Key pattern across all platforms: Every AI search engine rewards the same core content qualities: factual specificity, clear structure, authoritative sourcing, and passage-level extractability. The differences between platforms are in retrieval mechanics, not in what constitutes good content.

If you build content that is citation-worthy for one AI platform, it tends to perform well across the others, even though each platform retrieves from a different pool of sources.

How Do AI Citations Actually Happen?

AI citations happen through a five-stage pipeline: retrieval, passage selection, answer synthesis, citation inclusion, and recommendation. Understanding each stage reveals where optimization effort should focus.

Stage 1: Retrieval. The AI system identifies candidate pages. For real-time systems (AI Overviews, Perplexity, ChatGPT with search), this means searching live indexes. For training-data-based responses, the model draws from patterns learned during training.

Google's query fan-out process means multiple sub-queries run simultaneously, pulling in candidate pages from different angles.

Stage 2: Passage selection. The system does not evaluate entire pages. It identifies specific passages that answer the user's question.

A 3,000-word article may contribute a single 2-3 sentence passage to an AI response. This is why content structure matters more than word count.

Stage 3: Answer synthesis. The AI combines information from multiple passages across multiple sources into a coherent answer. It may paraphrase, summarize, or quote directly. The passages that survive this stage are the ones that state information clearly, with specificity and supporting evidence.

Stage 4: Citation inclusion. Not every source that contributed to an answer gets cited. AI systems assign citations based on which sources most directly support specific claims. Sources with statistics, named studies, direct quotes, and specific data points earn citations at higher rates.

AI models do not cite pages. They cite passages. A single well-structured paragraph with a clear claim, supporting data, and a cited source will outperform a thousand words of general commentary.
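To make the passage-versus-page idea concrete, here is a toy Python sketch of Stage 2. It splits a page into blank-line-separated passages and scores each one by the fraction of the query's words it contains. This is an illustration under heavy assumptions, not how any of these platforms actually selects passages (production systems rely on embeddings and learned rerankers); the function names and sample text are hypothetical.

```python
import re


def split_passages(text: str) -> list[str]:
    """Split a page into passages on blank lines (a stand-in for real chunking)."""
    return [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]


def tokenize(text: str) -> set[str]:
    """Lowercase alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def score(query: str, passage: str) -> float:
    """Toy relevance: fraction of the query's distinct words found in the passage."""
    q = tokenize(query)
    return len(q & tokenize(passage)) / len(q) if q else 0.0


def best_passage(query: str, page: str) -> tuple[float, str]:
    """Return (score, passage) for the passage that best matches the query."""
    return max(((score(query, p), p) for p in split_passages(page)), default=(0.0, ""))


page = """Our company was founded in 2015 and cares about customer service.

AI Overviews reach over 2 billion monthly users across 200+ countries (Google Q2 2025 earnings).

Contact us to learn more."""

score_value, passage = best_passage("How many monthly users do AI Overviews reach?", page)
print(round(score_value, 3), passage)
```

The two generic paragraphs score zero, while the passage that states a specific, sourced fact is selected on its own, independent of how much other text surrounds it on the page.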

The Princeton/Georgia Tech GEO paper (presented at KDD 2024) tested specific content modifications and their effect on AI visibility. Content enriched with citations, quotations, and statistics improved visibility by 30% or more in certain settings.

This is not theory. It is measured and published research.

The implication is practical. Every important page on your site needs at least one passage that states a clear fact, supports it with evidence, and is formatted for easy extraction. Learn more about how to get cited by AI.

Citation vs Recommendation vs Mention

These are different outcomes with different values.

| Outcome | What it looks like | Value | How to earn it |
|---|---|---|---|
| Citation | AI links to your page as a source | Highest: drives direct traffic | Specific claims with evidence, structured content |
| Recommendation | AI names your brand as a solution | High: drives branded searches | Strong entity presence, review signals, community consensus |
| Mention | AI references your brand in context | Moderate: builds familiarity | Broad web presence, consistent brand messaging |

AI SEO strategies target all three. The tactics overlap significantly, but the content and entity optimization required for citations is more demanding than for mentions.

Aim for citations. Recommendations and mentions follow naturally.

What Is the Difference Between GEO, AEO, and LLM SEO?

GEO, AEO, and LLM SEO are three overlapping subdisciplines of AI SEO, each targeting a different layer of how AI systems find and cite content. Here is what each means and how they relate.

Generative Engine Optimization (GEO)

GEO is the practice of optimizing content to appear in AI-generated responses. The term comes from the Princeton/Georgia Tech research paper (KDD 2024), which found that evidence-rich content modifications — citations, statistics, expert quotes — improved visibility by 30% or more. GEO focuses on how content is written: evidence density, structural clarity, and passage-level extractability.

For implementation tactics, see Phase 5: Content Creation and Optimization. Need done-for-you GEO? See GEO services.

Answer Engine Optimization (AEO)

AEO is the practice of structuring content to be selected as the direct answer to user queries. It evolved from featured snippet optimization (2014) and now encompasses all AI answer formats including AI Overviews. Where GEO emphasizes evidence density, AEO emphasizes query-intent alignment — starting with the question and working backward to a clean, extractable answer.

For implementation tactics, see Phase 5: Content Creation and Optimization. For implementation support, explore AEO services.

LLM SEO (LLMO)

LLM SEO focuses on how your brand appears in AI model responses through their training data and parametric memory. ChatGPT, Claude, and Gemini all have training data cutoff dates, and the information present at training time shapes their baseline "knowledge" of your brand. LLM SEO ensures that knowledge is accurate, positive, and consistent across authoritative sources (Wikipedia, Crunchbase, industry publications).

For training data tactics, see Phase 6: Authority and Topical Depth. For dedicated strategies, explore LLM SEO services. For off-site tactics, see Phase 7: Off-Site Citations and Community.

GEO vs AEO vs LLM SEO Comparison

| Term | Focus | Primary mechanism | Key difference |
|---|---|---|---|
| GEO | Content optimization for AI-generated responses | Evidence density, structural clarity, passage extractability | Emphasizes how content is written |
| AEO | Query-answer matching for direct answers | Featured snippet optimization, FAQ structure, answer-first format | Emphasizes query-intent alignment |
| LLM SEO (LLMO) | Brand representation in AI training data | Cross-platform brand consistency, authoritative source presence | Emphasizes training data influence |
| AI SEO | Umbrella discipline encompassing all above | All mechanisms combined | The complete framework |

In practice, most effective AI SEO strategies use all three simultaneously. If you execute the framework in this guide, you are doing all three.

- AI SEO - Artificial Intelligence Search Engine Optimization

- GEO - Generative Engine Optimization

- AEO - Answer Engine Optimization

- LLM SEO - Large Language Model Search Engine Optimization

- LLMO - Large Language Model Optimization

- RAG - Retrieval-Augmented Generation. The technique where AI models search external sources in real time to supplement their training data before generating a response.

- E-E-A-T - Experience, Expertise, Authoritativeness, Trustworthiness

- AIO - AI Overview (Google)

- SERP - Search Engine Results Page

- UGC - User Generated Content

- NER - Named Entity Recognition

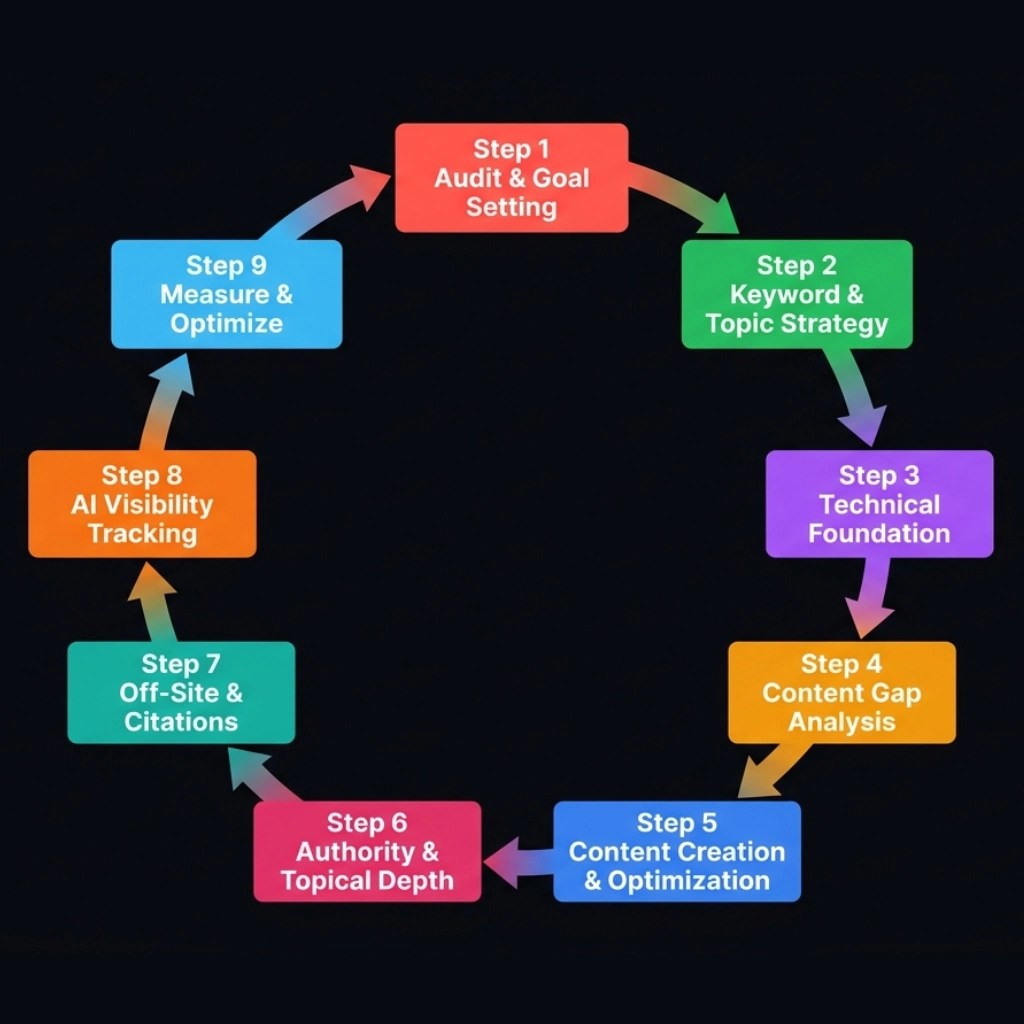

What Is the 9-Phase AI SEO Framework?

The 9-phase AI SEO framework is a continuous cycle that covers every layer of AI search optimization, from auditing your current visibility to measuring results and feeding insights back into the next iteration. Each phase feeds the next. Phase 9 feeds back into Phase 1.

How Do You Audit Your AI Visibility and Set Goals?

You audit AI visibility by systematically querying each major AI platform about your brand, benchmarking against competitors, and setting measurable citation goals. Everything starts with knowing where you stand.

AI visibility measures how often and how prominently your brand appears in AI-generated answers. You cannot optimize what you cannot measure. AI visibility measurement is still maturing, but practical frameworks exist today. For a full explanation, read what is AI visibility.

AI Visibility Audit Method

The 8 Manual Audit Query Categories

Before investing in tools, conduct a manual audit. Use these eight prompt categories across ChatGPT, Perplexity, and Gemini:

- Category query: "What are the best [your service/product category] companies?"

- Comparison query: "Compare [your brand] vs [competitor]."

- Recommendation query: "I need [specific use case]. What should I use?"

- Problem query: "How do I solve [problem your product addresses]?"

- Brand query: "What do you know about [your brand]?"

- Local/regional query: "Who are the top [your industry] companies in [city/region]?"

- Evaluation query: "What should I look for when choosing a [your product category]?"

- Reputation query: "Is [your brand] good? What do customers say?"

Record whether your brand appears, in what position, with what sentiment, and whether a citation links to your site. Repeat monthly.
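If you run the audit by hand, a consistent record format makes month-over-month comparison easier. The Python sketch below is one possible shape for it; the field names, the example brand "Acme CRM", and the two summary rates are assumptions chosen to mirror what the audit records (appearance, position, sentiment, and whether a citation links to your site).

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class AuditResult:
    """One audit prompt run on one platform (field names are illustrative)."""
    platform: str            # e.g. "ChatGPT", "Perplexity", "Gemini"
    category: str            # one of the eight prompt categories
    prompt: str
    brand_appears: bool
    position: Optional[int]  # 1 = first brand named; None if the brand is absent
    sentiment: str           # e.g. "positive", "neutral", "negative", "n/a"
    links_to_site: bool      # True if the answer cites a URL on your domain


def summarize(results: list[AuditResult]) -> dict[str, dict[str, float]]:
    """Per platform: share of prompts where the brand appears, and where it is linked."""
    by_platform = defaultdict(list)
    for r in results:
        by_platform[r.platform].append(r)
    return {
        platform: {
            "appearance_rate": sum(r.brand_appears for r in rows) / len(rows),
            "link_rate": sum(r.links_to_site for r in rows) / len(rows),
        }
        for platform, rows in by_platform.items()
    }


# Hypothetical audit rows for a hypothetical brand.
results = [
    AuditResult("ChatGPT", "Category", "What are the best CRM companies?", True, 3, "neutral", False),
    AuditResult("ChatGPT", "Brand", "What do you know about Acme CRM?", True, 1, "positive", True),
    AuditResult("Perplexity", "Category", "What are the best CRM companies?", False, None, "n/a", False),
    AuditResult("Perplexity", "Brand", "What do you know about Acme CRM?", True, 1, "positive", True),
]
print(summarize(results))
```

Position and sentiment are stored for manual review rather than averaged, since the order of brands in an AI answer can change between identical runs.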

AI Visibility Tools

| Tool | Focus | Key feature |
|---|---|---|
| Semrush | AI Overview tracking | Tracks AI Overview appearance for keyword sets (analyzed 200,000+ AI Overviews in their 2024-2025 study) |
| Ahrefs | AI citation monitoring | Tracks which URLs get cited in AI responses |
| Otterly | AI search monitoring | Dedicated AI visibility tracking across platforms |
| Peec AI | Brand monitoring in AI | Tracks brand mentions across AI search engines |
| Profound | AI response analysis | Analyzes AI-generated responses at scale |
| Ziptie | AI citation tracking | Maps citation sources and patterns |

AI Share of Voice Baseline

AI Share of Voice measures how often your brand is cited or recommended in AI responses relative to competitors. The formula:

AI Share of Voice = (Your brand mentions in AI responses / Total mentions of your brand and all tracked competitors) x 100

Calculate this across a defined set of queries relevant to your business. Track monthly. The trend matters more than the absolute number.
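The formula takes only a few lines to compute. This Python sketch assumes you have already counted, for each tracked brand (yours included), how many AI responses in your query set mentioned it. The function names and sample counts are illustrative; the ChatGPT counts are chosen to match the 8% vs 35% example in the competitive benchmarking section.

```python
def share_of_voice(mentions: dict[str, int], brand: str) -> float:
    """AI Share of Voice for `brand` as a percentage.

    `mentions` maps every tracked brand, including yours, to the number of
    AI responses in the query set that mentioned it.
    """
    total = sum(mentions.values())
    if total == 0:
        return 0.0  # nobody was mentioned; report 0 rather than divide by zero
    return mentions.get(brand, 0) * 100 / total


def average_sov(per_platform: dict[str, dict[str, int]], brand: str) -> float:
    """Unweighted mean of per-platform SoV, one platform = one vote."""
    values = [share_of_voice(m, brand) for m in per_platform.values()]
    return sum(values) / len(values) if values else 0.0


per_platform = {
    "ChatGPT": {"Your brand": 8, "Competitor 1": 35, "Competitor 2": 30, "Competitor 3": 27},
    "Perplexity": {"Your brand": 20, "Competitor 1": 30, "Competitor 2": 25, "Competitor 3": 25},
}
print(share_of_voice(per_platform["ChatGPT"], "Your brand"))    # 8.0
print(share_of_voice(per_platform["ChatGPT"], "Competitor 1"))  # 35.0
print(average_sov(per_platform, "Your brand"))                  # 14.0
```

Computing SoV per platform before averaging keeps the platform-level gaps visible, which matters because a brand can be strong on one platform and absent on another.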

Setting AI SEO Goals

Once you have your baseline audit data, you need goals. But AI SEO goals are fundamentally different from traditional SEO goals, and applying the wrong framework will set you up for frustration and misaligned expectations.

Why AI SEO Goals Differ from Traditional SEO Goals

Traditional SEO goals are position-based or traffic-based. Rank #1 for a target keyword. Grow organic traffic by 50% year-over-year. These goals work because traditional search has standardized, measurable ranking positions and clear click attribution.

AI SEO operates in a fundamentally different environment. There are no standardized ranking positions. When ChatGPT mentions three brands in a response, there is no "position 1" in the traditional sense. The order can vary between identical queries run minutes apart. The concept of a fixed ranking does not apply.

AI SEO is also multi-platform by nature. Your brand needs visibility across ChatGPT, Perplexity, Gemini, Claude, and AI Overviews at minimum, plus assistants such as Copilot, each with different retrieval mechanisms and source preferences. A brand can dominate on Perplexity and be invisible on ChatGPT. Only 11% of domains get cited by both ChatGPT and Perplexity. These are separate ecosystems that require platform-specific goals.

Finally, attribution is indirect. When someone asks ChatGPT for a recommendation, visits your site, and converts three days later, that journey is difficult to track. AI search rarely shows up as a clean referral source in analytics. This means your goals must account for proxy metrics and leading indicators, not just direct conversions.

The bottom line: a 10-point AI Share of Voice gain in 6 months is ambitious and meaningful. Do not apply traditional SEO benchmarks to AI SEO. A 50% traffic increase goal makes no sense when AI platforms do not send measurable traffic in the same way Google does.

The AI SEO Goal-Setting Template

Use this template to set structured, measurable AI SEO goals. Fill in your baseline numbers from the audit and set realistic targets for each time horizon.

| Goal Category | Current Baseline | 90-Day Target | 6-Month Target | 12-Month Target | How to Measure |
|---|---|---|---|---|---|
| AI Share of Voice | ___% | ___% | ___% | ___% | Monthly query audit across 3+ platforms |
| Citation frequency | ___/__ queries | ___/__ | ___/__ | ___/__ | Count citations per tracked query set |
| Platform coverage | ___/5 platforms | ___/5 | ___/5 | ___/5 | Test core queries on each platform |
| Branded search lift | ___ impressions/mo | +___% | +___% | +___% | Search Console branded queries |
| Content extractability | ___% of top 20 pages | ___% | ___% | 80%+ | Page-by-page structure audit |

The key is specificity. "Improve AI visibility" is not a goal. "Increase AI Share of Voice from 12% to 22% within 6 months by optimizing our top 20 pages and building citations on 3 industry platforms" is a goal.

Aligning Goals with Business Objectives

AI SEO goals must connect to business outcomes. The right primary goal depends on your business model.

| Business Type | Primary AI SEO Goal | Secondary Goal | KPI to Report |
|---|---|---|---|
| Brand awareness (B2C) | Maximize mention frequency | Positive sentiment | "Brand mentioned in X% of AI responses for our category" |
| Lead generation (B2B) | Citation + click-through | AI-attributed leads | "AI search drove X qualified leads this quarter" |
| E-commerce | Product recommendation frequency | AI referral revenue | "AI platforms recommended products X times; revenue = $Y" |
| Local services | Appear in local AI recommendations | AI-driven calls/forms | "Recommended for X of Y local queries" |

When reporting to stakeholders, translate AI SEO metrics into language they understand. Executives do not care about AI Share of Voice. They care about brand reach, pipeline, and revenue. Frame every AI SEO goal in terms of the business outcome it serves.

Competitive Benchmarking

Before setting targets, benchmark against competitors. Run the same audit queries for your top 3 competitors. Calculate their AI Share of Voice using the same formula and query set you used for your own brand.

Your goals should be informed by competitive position, not arbitrary targets. If your top competitor has a 35% AI Share of Voice and you are at 8%, setting a goal of 50% in 6 months is unrealistic. Setting a goal of 15-20% is ambitious but achievable, and a gain of 7 to 12 points would represent a significant competitive shift.

| Brand | AI SoV (ChatGPT) | AI SoV (Perplexity) | AI SoV (Gemini) | Avg AI SoV | Citation with Link? |
|---|---|---|---|---|---|
| Your brand | ___% | ___% | ___% | ___% | Yes / No |
| Competitor 1 | ___% | ___% | ___% | ___% | Yes / No |
| Competitor 2 | ___% | ___% | ___% | ___% | Yes / No |
| Competitor 3 | ___% | ___% | ___% | ___% | Yes / No |

Remember: only 11% of domains get cited by both ChatGPT and Perplexity. These platforms are separate ecosystems with different source preferences. Set platform-specific goals, not just an aggregate number. A brand that is strong on Perplexity but invisible on ChatGPT has a very different optimization roadmap than one with even coverage across both.

How Do You Build an AI Keyword and Topic Strategy?

You build an AI keyword strategy by identifying the questions people ask AI systems and the sub-queries those systems generate behind the scenes. Traditional keyword research asks: "What are people typing into Google?" AI keyword strategy asks a different question: "What are people asking AI systems, and what sub-queries does the AI generate behind the scenes to answer them?"

The difference is not subtle. It changes how you research, what you prioritize, and what content you create.

How AI Queries Differ from Traditional Keywords

When a user searches "best CRM for startups" in Google's AI Mode, the system doesn't run a single search. It decomposes that query into 10-16 sub-queries: pricing comparisons, feature breakdowns, integration capabilities, user reviews, startup-specific requirements, and more. A single keyword can potentially trigger 15,600 retrieval events across the system (Source: iPullRank, Ekamoira). Your content doesn't need to rank for the original query. It needs to answer the sub-queries the AI generates.

Roughly 88% of these AI retrieval events are "dark queries" with zero traditional search volume. They exist only inside the AI's decomposition pipeline. No keyword tool will show them to you.
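You cannot see a platform's real sub-queries, but you can borrow the idea when planning content. The Python sketch below expands a seed topic into facet sub-queries (pricing, features, integrations, reviews, audience fit, loosely following the CRM example above) and flags which ones a draft page does not yet address. The templates, stop-word list, and word-matching check are hypothetical simplifications; real systems generate sub-queries with a language model, not fixed templates.

```python
import re

# Hypothetical facet templates; real fan-out is model-generated and query-specific.
FACET_TEMPLATES = [
    "{topic} pricing",
    "{topic} features",
    "{topic} integrations",
    "{topic} reviews",
    "{topic} for {audience}",
]
STOP_WORDS = {"for", "the", "a", "an", "of", "and"}


def fan_out(topic: str, audience: str) -> list[str]:
    """Expand one seed topic into facet sub-queries."""
    return [t.format(topic=topic, audience=audience) for t in FACET_TEMPLATES]


def words(text: str) -> set[str]:
    """Lowercase alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def uncovered(sub_queries: list[str], page_text: str) -> list[str]:
    """Sub-queries whose content words do not all appear in the page.

    Exact word matching only: "integration" will not match "integrations".
    """
    page_words = words(page_text)
    return [q for q in sub_queries if not (words(q) - STOP_WORDS) <= page_words]


subs = fan_out("crm", "startups")
print(uncovered(subs, "Our CRM guide covers pricing, features, and reviews."))
# ['crm integrations', 'crm for startups']
```

The gaps it reports (here, integrations and startup fit) are candidate sections to add, which is the practical meaning of answering the sub-queries rather than the original keyword.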

| Dimension | Traditional Keywords | AI Queries |
|---|---|---|
| Average length | 3-4 words | 23 words (Source: xFunnel) |
| Specificity | Broad category terms | Highly specific, contextual, multi-part |
| Intent signal | Fits neat categories (informational, navigational, transactional) | 70%+ don't fit traditional intent categories |
| Expected format | List of links to explore | Direct synthesized answer with sources |
| Research tool | Ahrefs, SEMrush, Google Keyword Planner | Manual AI testing, PAA mining, Reddit analysis |

Three Categories of AI-Triggering Queries

Not every query triggers an AI-generated response. An Ahrefs study of 146 million SERPs found that question-format queries trigger AI Overviews 58% of the time. Single-word queries trigger them only 9.5% of the time. Queries with 7+ words hit 46.4%.

Google uses eight internal query classifiers, and the ones most relevant to AI SEO are: Reason queries (59.8% AI Overview trigger rate), Boolean queries (57.4%), Definition queries (47.3%), and Instruction queries (35.1%). Understanding which query types trigger AI responses lets you create content that matches.

Three categories cover the majority of AI-triggering queries:

Synthesis Queries

These require combining information from multiple sources into a single answer. Examples: "pros and cons of HubSpot vs Salesforce for a 10-person team," "how does content marketing ROI compare to paid advertising in B2B SaaS."

Synthesis queries have the highest AI trigger rate because no single source can fully answer them. The AI must pull from several pages, compare data, and construct a unified response. If your content provides the structured comparison data the AI needs, you earn the citation.

Recommendation Queries

These ask for a specific solution to a defined problem. Examples: "best project management tool for remote marketing teams," "what CRM should a bootstrapped startup use."

Recommendation queries trigger curated lists in AI responses. The AI evaluates multiple sources to build its recommendation. Content that includes clear selection criteria, specific use-case matching, and transparent evaluation methodology gets cited over generic "top 10" listicles.

Explanation Queries

These ask how something works or how concepts differ. Examples: "how does retrieval-augmented generation work," "what's the difference between first-party and third-party cookies after Chrome's changes."

Explanation queries favor content with clear definitions, step-by-step breakdowns, and concrete examples. Answer-first structure matters here: lead with the definition, then expand with depth.

How to Discover AI-Triggering Queries

Traditional keyword tools are blind to 88% of AI retrieval events. Use these four methods to find queries that actually trigger AI responses in your niche.

Method 1: Reverse-Engineer AI Responses

Run 20-30 queries relevant to your business across ChatGPT, Perplexity, and Google AI Overviews. Record which queries trigger detailed AI answers, which sources get cited, and what format the AI uses to respond. This gives you a ground-truth dataset no tool can replicate.

Focus on conversational, multi-part questions. "Best CRM" tells you little. "What CRM should a 15-person B2B SaaS startup use if they need HubSpot-level marketing automation but can't afford enterprise pricing" tells you exactly what content to create.

Method 2: Mine People Also Ask

PAA boxes reveal the conversational patterns Google associates with a topic. Expand every PAA result for your target queries and record the questions. These are the question formats real users ask, and they closely mirror the phrasing users bring to AI platforms.

Method 3: Analyze Perplexity Related Questions

After Perplexity answers a query, it surfaces related follow-up questions. These are generated by the AI based on what users commonly ask next. Run your core queries through Perplexity and collect every related question it suggests. This gives you a query map that follows actual user intent paths.

Method 4: Monitor Reddit and Community Platforms

The exact questions users post on Reddit, Stack Exchange, and industry forums are often the same questions they ask AI platforms verbatim. Search Reddit for your topic and record the full question text. Pay attention to questions with high engagement: these represent real information needs that AI systems are trained to answer.

The AI Query-Content Matrix

Map each query type to the content format most likely to earn a citation:

| Query Type | Example | Optimal Content Format | Schema Type |
|---|---|---|---|
| Synthesis | "Pros and cons of X vs Y" | Comparison article with tables | FAQ + Article |
| Recommendation | "Best X for [use case]" | Curated list with criteria | Product + ItemList |
| Explanation | "How does X work?" | Pillar guide, answer-first | HowTo + FAQ |
| Evaluation | "Is X worth it?" | Decision guide with scoring matrix | FAQ + Article |
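Once you have a list of discovered queries, a rough first pass can sort them into the four rows of the matrix before you review them by hand. The keyword rules in this Python sketch are assumptions (they are not how any AI platform classifies queries), the rule order decides ties, and anything the rules miss returns None for manual triage.

```python
import re
from typing import Optional

# Hypothetical keyword rules, checked in order; the first match wins.
RULES = [
    ("Evaluation", re.compile(r"\b(is .+ worth|worth it|should i (buy|choose))\b")),
    ("Synthesis", re.compile(r"\b(vs\.?|versus|pros and cons|compare|compared)\b")),
    ("Recommendation", re.compile(r"\b(best|top|which|what .+ should)\b")),
    ("Explanation", re.compile(r"\b(how does|how do|what is|what's the difference)\b")),
]

# Content format and schema type per query type, copied from the matrix.
MATRIX = {
    "Synthesis": ("Comparison article with tables", "FAQ + Article"),
    "Recommendation": ("Curated list with criteria", "Product + ItemList"),
    "Explanation": ("Pillar guide, answer-first", "HowTo + FAQ"),
    "Evaluation": ("Decision guide with scoring matrix", "FAQ + Article"),
}


def classify(query: str) -> Optional[str]:
    """Return the first matching query type, or None if no rule fires."""
    q = query.lower()
    for label, pattern in RULES:
        if pattern.search(q):
            return label
    return None


for query in [
    "Pros and cons of HubSpot vs Salesforce for a 10-person team",
    "What CRM should a bootstrapped startup use?",
    "How does retrieval-augmented generation work?",
]:
    label = classify(query)
    print(query, "->", label, MATRIX.get(label))
```

Keeping the rules as an ordered list makes the precedence explicit: "Is X worth it?" is caught as Evaluation before the broader Recommendation patterns get a chance to match.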

Prioritizing Topics by AI Citation Opportunity

Not every AI-triggering query is worth creating content for. Score each topic across three dimensions to prioritize your content calendar:

| Dimension | Score 1 (Low) | Score 3 (Medium) | Score 5 (High) |
|---|---|---|---|
| AI Response Frequency | AI rarely generates a detailed answer | AI answers on some platforms | AI generates detailed answers on all major platforms |
| Citation Gap | Strong, authoritative sources already dominate | Mixed quality sources cited | AI cites weak, outdated, or generic sources |
| Business Relevance | Tangentially related to your offering | Related to your category | Directly describes your product's use case |

Multiply the three scores. Topics scoring 75+ (out of 125) are your highest-priority targets. Topics scoring below 27 are not worth the investment.
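The scoring rule translates directly into code. This Python sketch multiplies the three 1-5 scores and applies the 75 and 27 cut-offs from the text; the "medium" label for scores between those cut-offs and the example topics are illustrative.

```python
def priority_score(ai_response_frequency: int, citation_gap: int, business_relevance: int) -> int:
    """Product of the three dimension scores (each 1-5), so the range is 1-125."""
    for s in (ai_response_frequency, citation_gap, business_relevance):
        if not 1 <= s <= 5:
            raise ValueError(f"score {s} is outside 1-5")
    return ai_response_frequency * citation_gap * business_relevance


def priority_tier(score: int) -> str:
    """Map a product score to a tier using the 75 and 27 cut-offs."""
    if score >= 75:
        return "high"    # highest-priority target
    if score < 27:
        return "skip"    # not worth the investment
    return "medium"      # illustrative label for the band in between


# Hypothetical topics scored as (AI response frequency, citation gap, business relevance).
topics = {
    "CRM pricing for startups": (5, 5, 3),
    "History of CRM software": (3, 3, 1),
    "CRM vs spreadsheet": (3, 3, 3),
}
for topic, dims in topics.items():
    s = priority_score(*dims)
    print(f"{topic}: {s} ({priority_tier(s)})")
```

Using only the 1, 3, and 5 anchors, a topic reaches 75 only with at least two 5s and a 3, so the high tier is reserved for topics that are strong on nearly every dimension.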

Run this scoring exercise for every topic on your list. Update it quarterly as AI responses evolve and new competitors enter the space.

Traditional keyword tools show search volume for typed queries. They do not show the conversational, multi-part queries people ask AI platforms. The gap between these two datasets is where AI SEO opportunity lives.

What Technical Foundation Does AI SEO Require?

AI SEO requires schema markup, crawler access, entity signals, and consistent brand representation as its technical foundation. If AI models cannot read and identify your site, nothing else in this framework matters.

Schema Markup for AI

Organization schema, LocalBusiness schema, FAQ schema, Article schema, and Product schema all help AI systems identify entities and relationships on your pages. Google's structured data documentation explicitly states that structured data helps Google understand the content of a page. For AI systems that draw from Google's index, that understanding can influence which pages get cited.
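As an illustration of Organization markup, the Python sketch below builds a JSON-LD block using the schema.org properties name, url, logo, and sameAs. The company name and every URL are placeholders, and the property selection is an assumption; check Google's structured data documentation for which properties it actually reads. Listing your profiles in sameAs is one way to tie the site to the authoritative sources mentioned in the LLM SEO section.

```python
import json


def organization_jsonld(name: str, url: str, logo: str, same_as: list[str]) -> str:
    """Return a <script> tag containing schema.org Organization markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": same_as,  # other profiles that describe the same entity
    }
    body = json.dumps(data, indent=2)
    # Escape "</" so no value can close the surrounding <script> tag early.
    body = body.replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder entity and URLs, for illustration only.
print(organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    logo="https://www.example.com/logo.png",
    same_as=[
        "https://www.linkedin.com/company/example-co",
        "https://www.crunchbase.com/organization/example-co",
    ],
))
```

The output goes in the page's HTML; keeping the same name and profile links here as on the external profiles supports the cross-platform consistency the framework calls for.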

AI Crawlers and Access Control

AI platforms use specific bots to crawl web content. Each bot has a different purpose, and your control over them varies. For a full walkthrough of getting your content into AI results, see how to appear in AI search.

AI Crawler Reference Table

| Bot | Company | Purpose | Robots.txt control | JS rendering |
|---|---|---|---|---|
| Googlebot | Google | Search indexing (including AI Overviews) | Yes | Yes (evergreen Chromium) |
| Google-Extended | Google | Gemini/Vertex AI training data | Yes | N/A (token only, not a separate crawler) |
| GPTBot | OpenAI | Model training data | Yes | Not publicly documented |
| OAI-SearchBot | OpenAI | ChatGPT search retrieval | Yes | Not publicly documented |
| ChatGPT-User | OpenAI | ChatGPT browsing (user-initiated) | Yes | Not publicly documented |
| ClaudeBot | Anthropic | Model training data | Yes | Not publicly documented |
| Claude-User | Anthropic | User-initiated search | Yes | Not publicly documented |
| Claude-SearchBot | Anthropic | Claude search retrieval | Yes | Not publicly documented |
| PerplexityBot | Perplexity | Search index crawling | Yes | Not publicly documented |
| Perplexity-User | Perplexity | User-initiated searches | Generally ignores (user-initiated) | Not publicly documented |
| Applebot | Apple | Siri, Spotlight, Safari search | Yes (also follows Googlebot rules if Applebot not specified) | May render pages in a browser-like environment |
| Applebot-Extended | Apple | Apple foundation model training | Yes | N/A (token only, not a separate crawler) |
| Meta-WebIndexer | Meta | Improve Meta AI search quality and support citations | Yes | Not publicly documented |
| Meta-ExternalAgent | Meta | Foundation model training / direct indexing | Yes | Not publicly documented |
| CCBot | Common Crawl | Dataset collection for Common Crawl corpus | Yes | No (JavaScript is not executed) |

A critical distinction: blocking Google-Extended does NOT affect AI Overviews. AI Overviews use Googlebot, the same crawler that powers regular search.

Google-Extended controls whether your content is used for Gemini model training. If you block Google-Extended but allow Googlebot, your content can still appear in AI Overviews.

Perplexity's user-initiated agent, Perplexity-User, generally ignores robots.txt because its requests are classified as user-initiated, similar to a browser (Perplexity docs). This is a meaningful distinction for publishers who want to control AI access: a robots.txt rule can block PerplexityBot but may not stop Perplexity-User.

JavaScript rendering matters. Most AI crawlers do not publicly document whether they render JavaScript. The exceptions: Googlebot uses evergreen Chromium and fully renders JS. Applebot may render pages in a browser-like environment (Apple support docs).

Common Crawl (CCBot) explicitly does not execute JavaScript (Common Crawl FAQ). For all other AI bots, assume they read raw HTML only. If your content depends on client-side rendering, critical text should also be available in the initial HTML response.
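One way to test this is to fetch a page without a browser and check whether the critical text is in the raw response. The sketch below uses only Python's standard library. The helper names are hypothetical, and the check (a case-insensitive substring match on text outside `<script>` and `<style>` tags) is a simplification of what any given crawler actually does.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class _TextExtractor(HTMLParser):
    """Collects text nodes, skipping the contents of <script> and <style>."""

    def __init__(self) -> None:
        super().__init__()
        self.parts: list[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def visible_text(html: str) -> str:
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    # Collapse whitespace so phrases that wrap across lines still match.
    return " ".join(" ".join(parser.parts).split())


def phrase_in_raw_html(html: str, phrase: str) -> bool:
    return " ".join(phrase.split()).lower() in visible_text(html).lower()


def fetch_raw_html(url: str, user_agent: str = "raw-html-check/0.1") -> str:
    # No JavaScript runs here: this is only the initial HTML response.
    req = Request(url, headers={"User-Agent": user_agent})
    with urlopen(req, timeout=10) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


# Client-side rendered page: the text only exists inside a script.
spa = '<div id="root"></div><script>render("Slab leak repair in Austin")</script>'
# Server-rendered page: the text is in the initial HTML.
ssr = "<main><h1>Slab leak repair in Austin</h1></main>"
print(phrase_in_raw_html(spa, "slab leak repair"))  # False
print(phrase_in_raw_html(ssr, "slab leak repair"))  # True
```

For a live page, pass the result of `fetch_raw_html("https://…")` to `phrase_in_raw_html` along with a sentence you expect AI crawlers to see.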

How Should You Configure Robots.txt for AI Crawlers?

A balanced robots.txt allows AI search bots to retrieve your content for real-time answers while blocking training data collection bots. Here is the recommended configuration:

# Allow standard search crawling (required for AI Overviews)
User-agent: Googlebot
Allow: /

# Block AI training data collection
User-agent: Google-Extended
Disallow: /

User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: Applebot-Extended
Disallow: /

User-agent: Meta-ExternalAgent
Disallow: /

User-agent: CCBot
Disallow: /

# Allow AI search features
User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /

User-agent: Claude-SearchBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: Applebot
Allow: /

User-agent: Meta-WebIndexer
Allow: /

This configuration keeps your content open to the AI search and retrieval crawlers listed above while opting it out of collection by the listed training bots. Adjust based on your organization's position on AI training.
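Before deploying, you can sanity-check the intended policy with Python's standard-library `urllib.robotparser`. The sketch below embeds the configuration above; the `EXPECTED` map and the test URL are assumptions that restate the intent of each group.

```python
from urllib.robotparser import RobotFileParser

# The configuration above, condensed. Python's parser treats a blank line as
# the end of a group, so each rule sits directly under its User-agent line.
ROBOTS_TXT = """\
User-agent: Googlebot
Allow: /

User-agent: Google-Extended
Disallow: /

User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: Applebot-Extended
Disallow: /

User-agent: Meta-ExternalAgent
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /

User-agent: Claude-SearchBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: Applebot
Allow: /

User-agent: Meta-WebIndexer
Allow: /
"""

# Intended outcome per product token: True = may crawl, False = blocked.
EXPECTED = {
    "Googlebot": True, "Google-Extended": False, "GPTBot": False,
    "ClaudeBot": False, "Applebot-Extended": False,
    "Meta-ExternalAgent": False, "CCBot": False, "OAI-SearchBot": True,
    "ChatGPT-User": True, "Claude-SearchBot": True, "PerplexityBot": True,
    "Applebot": True, "Meta-WebIndexer": True,
}


def check_robots(robots_txt: str, url: str = "https://example.com/blog/post") -> dict[str, bool]:
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # Pass bare product tokens: the parser keeps only the text before the
    # first "/" of the agent string, then does a substring match.
    return {agent: parser.can_fetch(agent, url) for agent in EXPECTED}


mismatches = {
    agent: allowed
    for agent, allowed in check_robots(ROBOTS_TXT).items()
    if allowed != EXPECTED[agent]
}
print(mismatches or "every agent matches the intended policy")
```

Python's matcher picks the first group whose token is a substring of the agent name, which is simpler than the most-specific-match rule Google documents, so a clean result is a sanity check rather than proof of how each vendor's crawler behaves. It also shows why blank lines inside a group matter: if a blank line separates `User-agent: GPTBot` from `Disallow: /`, this parser discards the rule and reports GPTBot as allowed.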

The llms.txt Proposal

Jeremy Howard proposed the llms.txt specification in September 2024. The idea: a markdown file at /llms.txt that provides AI-friendly summaries of your site's key content, structured specifically for LLM consumption.
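A minimal sketch of the file's shape, following the structure the proposal describes: an H1 with the site name, a blockquote summary, then H2 sections containing markdown link lists with optional notes after a colon. The proposal gives a section named "Optional" special meaning (its links can be skipped when a shorter context is needed). The site name, URLs, and notes below are placeholders.

```python
def build_llms_txt(name: str, summary: str,
                   sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render an llms.txt document.

    sections maps an H2 heading to (title, url, note) entries; an empty
    note omits the ": note" suffix.
    """
    lines = [f"# {name}", "", f"> {summary}"]
    for heading, links in sections.items():
        lines += ["", f"## {heading}", ""]
        for title, url, note in links:
            entry = f"- [{title}]({url})"
            lines.append(f"{entry}: {note}" if note else entry)
    return "\n".join(lines) + "\n"


out = build_llms_txt(
    "Example Co",
    "Example Co makes scheduling software for dental clinics.",
    {
        "Docs": [("Product overview", "https://example.com/product.md",
                  "Features and pricing tiers")],
        "Optional": [("Company history", "https://example.com/about.md", "")],
    },
)
print(out)
```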

Adoption remains low. A Rankability study found only 0.3% of the top 1,000 sites have implemented llms.txt. A broader SE Ranking study of approximately 300,000 domains found 10.13% adoption.

The specification is advisory, not an official standard. No major AI platform has committed to using it as a ranking signal.

The practical recommendation: implement llms.txt if you have the resources, but do not prioritize it over the fundamentals covered in this guide. Your robots.txt configuration, schema markup, and content structure matter far more today.

E-E-A-T Signals

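E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness, the quality framework in Google's Search Quality Rater Guidelines. AI systems built on Google's index and ranking systems, such as AI Overviews, are likely to reflect these signals.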

Author bylines with linked author pages, clear sourcing, editorial standards pages, and credentials displayed on author profiles all contribute. In an AI visibility audit, these technical elements are the first things to check.

Crawler access. Configure your robots.txt to allow the AI search bots listed above. Verify crawl access using server logs. If OAI-SearchBot or PerplexityBot cannot reach your pages, those platforms cannot cite you.
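A rough version of that log check: count requests and status codes per AI crawler. The sketch assumes the common Apache/Nginx "combined" log format and matches the product tokens from the crawler table (Google-Extended and Applebot-Extended are left out because they are tokens, not crawlers, so they never appear in logs). The sample lines are invented, and user-agent strings can be spoofed, so confirming a hit is genuine still requires the IP or reverse-DNS verification that vendors document.

```python
import re
from collections import Counter, defaultdict

AI_BOTS = [
    "Googlebot", "GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot",
    "Claude-User", "Claude-SearchBot", "PerplexityBot", "Perplexity-User",
    "Applebot", "Meta-WebIndexer", "Meta-ExternalAgent", "CCBot",
]

# Combined log format: ip ident user [time] "request" status bytes "referrer" "agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) \S+ "(?P<referrer>[^"]*)" "(?P<agent>[^"]*)"'
)


def crawler_hits(lines):
    """Return {bot: Counter(status_code -> count)} for AI crawler requests."""
    hits = defaultdict(Counter)
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines in other formats
        agent = match.group("agent").lower()
        bot = next((b for b in AI_BOTS if b.lower() in agent), None)
        if bot:
            hits[bot][match.group("status")] += 1
    return hits


sample = [
    '203.0.113.5 - - [10/Mar/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; OAI-SearchBot/1.0"',
    '198.51.100.7 - - [10/Mar/2026:10:01:00 +0000] "GET /blog/guide HTTP/1.1" 403 310 "-" '
    '"Mozilla/5.0 (compatible; PerplexityBot/1.0; +https://perplexity.ai/perplexitybot)"',
    '192.0.2.9 - - [10/Mar/2026:10:02:00 +0000] "GET / HTTP/1.1" 200 8000 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
for bot, statuses in crawler_hits(sample).items():
    print(bot, dict(statuses))
```

A run of 403 responses for PerplexityBot, as in the second sample line, is exactly the kind of silent block this check is meant to surface.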

Entity Optimization and Brand Representation

AI models understand the world in terms of entities: people, organizations, products, concepts, and their relationships. Entity optimization ensures AI models have a clear, accurate, and comprehensive understanding of your brand as an entity.

AI models build a composite picture of your brand from every source they can find. If those sources disagree or are missing, the model has nothing to recommend.

The Entity Home Page

Your website needs a single page that serves as the definitive source of truth about your organization. This is typically your About page, but it must go beyond a standard company narrative.

Include: founding date, founders, headquarters location, service areas, core products or services, notable clients or partnerships, awards, and key personnel. State these as facts, not marketing copy. AI models extract factual claims more reliably than promotional language.

Organization Schema

Implement Organization (or LocalBusiness) schema with comprehensive properties. Google's Organization structured data documentation recommends properties such as name, url, logo, description, foundingDate, address, contactPoint, and sameAs. The schema.org founder property can also identify your founders.

The sameAs property is critical. It connects your entity to your profiles across the web:

"sameAs": [
  "https://www.linkedin.com/company/yourbrand",
  "https://twitter.com/yourbrand",
  "https://www.crunchbase.com/organization/yourbrand",
  "https://en.wikipedia.org/wiki/Yourbrand",
  "https://www.wikidata.org/wiki/Q12345678"
]

These connections help AI models aggregate information about your entity from multiple sources and build a unified understanding.
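For reference, a sketch that assembles Organization markup in Python and wraps it in the JSON-LD script tag pages embed (JSON-LD can sit in the page head or body). Every value is a placeholder, and only a subset of properties is shown; address and contactPoint would be added the same way. It uses schema.org's `founder` property with `Person` objects.

```python
import json


def organization_jsonld(name: str, url: str, logo: str, description: str,
                        founding_date: str, founders: list[str],
                        same_as: list[str]) -> dict:
    """Build schema.org Organization markup as a Python dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "description": description,
        "foundingDate": founding_date,  # ISO 8601 date, e.g. "2015-03-01"
        "founder": [{"@type": "Person", "name": person} for person in founders],
        "sameAs": same_as,
    }


data = organization_jsonld(
    name="YourBrand",
    url="https://www.yourbrand.com",
    logo="https://www.yourbrand.com/logo.png",
    description="YourBrand builds scheduling software for dental clinics.",
    founding_date="2015-03-01",
    founders=["Jane Doe"],
    same_as=[
        "https://www.linkedin.com/company/yourbrand",
        "https://www.crunchbase.com/organization/yourbrand",
        "https://www.wikidata.org/wiki/Q12345678",
    ],
)
snippet = f'<script type="application/ld+json">\n{json.dumps(data, indent=2)}\n</script>'
print(snippet)
```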

Google Business Profile

For businesses with a physical presence, Google Business Profile is a direct input to Google's Knowledge Graph. Complete every field. Upload photos. Collect reviews. Post updates.

This data feeds directly into how Google's AI systems understand your business, including location, services, hours, and customer sentiment.

Brand Fact Consistency

AI models cross-reference information across sources. If your LinkedIn says you were founded in 2015, your website says 2016, and Crunchbase says 2014, the AI model has conflicting signals and may present inaccurate information or avoid citing you entirely.

Audit your brand facts across every platform: founding date, employee count, headquarters, service descriptions, leadership names and titles. Make them consistent. This is tedious work with outsized impact on AI Share of Voice.
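Once the facts are collected into a spreadsheet or dict, spotting conflicts is mechanical. A minimal sketch, assuming one dict of facts per platform; the platform names and values mirror the founding-date example above and are illustrative.

```python
from collections import defaultdict


def find_conflicts(profiles: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """Map each fact with disagreeing values to {normalized value: [sources]}.

    Values are compared after trimming and case-folding, so "Austin, TX"
    and "austin, tx" count as the same value.
    """
    by_fact: dict = defaultdict(lambda: defaultdict(list))
    for source, facts in profiles.items():
        for fact, value in facts.items():
            by_fact[fact][value.strip().casefold()].append(source)
    return {fact: dict(values) for fact, values in by_fact.items() if len(values) > 1}


profiles = {
    "website": {"founded": "2016", "headquarters": "Austin, TX"},
    "linkedin": {"founded": "2015", "headquarters": "Austin, TX"},
    "crunchbase": {"founded": "2014", "headquarters": "austin, tx"},
}
for fact, values in find_conflicts(profiles).items():
    print(fact, values)
```

This sketch does not flag a fact that is missing from a platform; those gaps need a separate check.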

Off-Site Profile Strategy

Build and maintain profiles on platforms that AI models trust: LinkedIn, Crunchbase, G2/Capterra (for SaaS), Clutch (for agencies), industry associations, and local business directories. Each profile is another node in your entity graph, another source that AI models can cross-reference when assembling information about your brand.

The minimum viable profile stack for any business: LinkedIn company page, Google Business Profile (if applicable), one industry-specific directory, and one review platform. For maximum entity coverage, add Crunchbase, Wikidata, and all relevant vertical directories listed in the citation map below.

Knowledge Graph Inclusion

Google's Knowledge Graph is a structured database of entities and their attributes. When your brand has a Knowledge Graph panel (the information box that appears on the right side of Google search results), it signals that Google recognizes your brand as a distinct entity. This recognition carries over into AI Overviews.

To earn a Knowledge Graph panel: secure a Wikipedia article if your organization meets notability guidelines, claim your Google Business Profile, implement Organization schema with sameAs links, and maintain consistent brand information across authoritative sources. There is no guaranteed path to Knowledge Graph inclusion; these signals are the commonly cited prerequisites rather than a published checklist.

How Do You Find Content Gaps in AI Search?

You find AI content gaps by comparing what AI platforms cite in your category against what your site actually covers. Most businesses audit their content for rankings and traffic. They check keyword positions, bounce rates, and page speed. None of that tells you whether AI platforms cite your content, recommend your competitors, or ignore your industry entirely.

AI content gap analysis answers a different set of questions: What queries trigger AI responses in your category? Which competitors get cited, and why? Where does your existing content fail the extractability test? And where do you have no content at all for queries that AI platforms answer every day?

Why Traditional Content Audits Miss AI Opportunities

A traditional content audit checks rankings, traffic, and engagement metrics. It tells you which pages perform in organic search. It tells you nothing about AI visibility.

AI content gap analysis checks three things traditional audits ignore:

- What AI platforms cite your competitors for — which specific pages, passages, and formats earn citations in ChatGPT, Perplexity, Gemini, and AI Overviews.

- Which query categories produce AI responses with no mention of your brand — these are the invisible losses you cannot detect in Google Search Console.

- Where your existing content fails the extractability test — you have content on the topic, but it is formatted in a way that AI models cannot efficiently extract and cite.

The cross-platform dimension makes this harder than traditional gap analysis. Ahrefs found that AI Overviews and AI Mode cite the same URLs only 13.7% of the time. Optimizing for one platform leaves you invisible on others. Your gap analysis must cover multiple AI surfaces, not just one.

The 4-Step AI Content Gap Analysis

Step 1 — Map Existing Content to AI Query Categories

Take your top 20-50 pages by business value (not traffic). For each page, identify 2-3 queries a prospect would ask an AI platform. Test each query across ChatGPT, Perplexity, and Gemini. Record the result.

| Query | Your Page | Cited? | Competitor Cited | Gap Type |
|---|---|---|---|---|
| "best project management tool for agencies" | /blog/pm-tools | No | monday.com cited | Missing comparison format |
| "how to choose a CRM" | /guide/crm-buyers | Yes (Perplexity) | N/A | Winning: expand |
| "CRM vs spreadsheet for small business" | No page | N/A | HubSpot cited | Missing content entirely |

The four gap types you will find: Winning (cited — protect and expand), Format gap (content exists but wrong structure), Quality gap (content exists but competitor passage is stronger), and Missing (no content at all). Each requires a different response.
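A minimal sketch of how audit rows could be recorded and labeled with these four types. The field names are hypothetical, and so is the rule that a different content format from the cited competitor signals a format gap; in practice, the format-versus-quality call is a judgment made while reading the competitor's cited passage.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AuditRow:
    query: str
    your_page: Optional[str]   # None when no page covers the query
    cited: bool                # your page cited on any tested platform
    competitor_format: Optional[str] = None
    your_format: Optional[str] = None


def gap_type(row: AuditRow) -> str:
    if row.cited:
        return "Winning"
    if row.your_page is None:
        return "Missing"
    # Hypothetical rule: a different format from the cited competitor page
    # points to a format gap; the same format points to a quality gap.
    if row.competitor_format and row.competitor_format != row.your_format:
        return "Format gap"
    return "Quality gap"


rows = [
    AuditRow("best project management tool for agencies", "/blog/pm-tools", False,
             competitor_format="comparison", your_format="narrative"),
    AuditRow("how to choose a CRM", "/guide/crm-buyers", True),
    AuditRow("CRM vs spreadsheet for small business", None, False,
             competitor_format="comparison"),
]
for row in rows:
    print(f"{row.query}: {gap_type(row)}")
```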

Step 2 — Analyze Competitor AI Citations

Run the same queries for your top 3-5 competitors. For every query where a competitor is cited and you are not, record: the cited URL, the content format, and the specific passage the AI model extracted.

Pattern recognition matters here. Listicles capture 21.9% of all AI citations, compared to 16.7% for articles and 13.7% for product pages (Profound/Search Engine Land, 75,000 AI answers, 1M+ citations). If your competitors win with listicles and you only publish narrative articles, that is a format gap, not a quality gap.

For professional services, the pattern is even more pronounced: third-party listicles capture 80.9% of citations vs only 19.1% for self-promotional content. A "Top 10 CRM consultants" article on an industry blog generates more AI citations than your own "Why choose us" page. This has direct implications for your distribution strategy — content placed on third-party publications gets up to 325% more AI citations than content published only on owned channels.
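To see whether your own competitor set follows the same pattern, tally the formats of the citations you recorded in this step. A minimal sketch; the URLs and format labels are invented sample data.

```python
from collections import Counter


def format_shares(citations: list[tuple[str, str]]) -> dict[str, float]:
    """Percentage of recorded (url, format) citations by content format."""
    counts = Counter(fmt for _url, fmt in citations)
    total = sum(counts.values())
    return {fmt: round(100 * n / total, 1) for fmt, n in counts.most_common()}


observed = [
    ("https://competitor-a.example/best-crm-tools", "listicle"),
    ("https://competitor-b.example/crm-guide", "article"),
    ("https://competitor-a.example/top-10-crm", "listicle"),
    ("https://competitor-c.example/pricing", "product page"),
]
print(format_shares(observed))
```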

Step 3 — Identify Content Format Gaps

Sometimes you have content on the right topic in the wrong format. A 3,000-word narrative blog post loses to a competitor's comparison table with structured data. A dense technical whitepaper loses to a competitor's FAQ page with clear question-answer pairs.

Cross-reference your findings with the Query-Content Matrix from Phase 2. For each gap, check whether the issue is missing content or misformatted content. The fix is different.

Two data points should guide your restructuring decisions:

- 44.2% of LLM citations originate from the first 30% of a page. If your key insight is buried in paragraph twelve, AI models may never extract it. Front-load your definitive claims, comparison tables, and data points.

- Schema markup alone produces a 47% citation rate uplift. Pages with proper structured data (FAQ, HowTo, Product, Organization) are significantly more extractable than unstructured equivalents.

Check each underperforming page against a citation-readiness scorecard: Does the page lead with a definitive claim? Is the key insight in the first 30%? Does it contain quotable, chunked passages? Pages with clearly delineated, evidence-backed passages earn 3-5x more citations than pages with undifferentiated prose.
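Two scorecard items can be checked automatically: whether the key claim appears in the first 30% of the page, and whether paragraphs are short enough to work as standalone chunks. A sketch, assuming position is measured in characters of plain text and paragraphs are separated by blank lines; the 120-word paragraph limit is a hypothetical threshold, not a figure from the studies above.

```python
from typing import Optional


def claim_position(text: str, claim: str) -> Optional[float]:
    """Relative character offset (0.0 = start, 1.0 = end) of the claim, or None."""
    index = text.lower().find(claim.lower())
    if index == -1 or not text:
        return None
    return index / len(text)


def readiness_report(text: str, claim: str, max_words: int = 120) -> dict:
    position = claim_position(text, claim)
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    return {
        "claim_found": position is not None,
        "claim_in_first_30_percent": position is not None and position <= 0.30,
        "paragraphs": len(paragraphs),
        # Paragraphs longer than max_words are less likely to read as one chunk.
        "paragraphs_over_limit": sum(len(p.split()) > max_words for p in paragraphs),
    }


page = "Email marketing returns $36 per $1 spent (Litmus, 2023).\n\n" + "Background detail. " * 50
print(readiness_report(page, "$36 per $1"))
```

Whether the page leads with a definitive claim still needs a human read; this only checks position and passage length.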

Step 4 — Prioritize Fills by Citation Impact

Not every gap is worth filling. Use the prioritization scorecard from Phase 2 to score each gap by four factors:

- Query volume + AI response frequency — How often is this query asked, and how often does the AI platform generate a cited response?

- Competitive difficulty — How strong is the currently cited content? A weak listicle on a low-authority blog is easier to displace than a comprehensive guide on a site with 32K+ referring domains (sites at that level are 3.5x more likely to be cited by ChatGPT).

- Effort to create or restructure — Restructuring an existing 2,000-word article is faster than creating a new 4,000-word guide from scratch.

- Business value — Does a citation for this query drive qualified prospects, or is it informational traffic with no conversion path?

Multiply the four scores. Focus your first 30 days on the top-scoring gaps. Revisit the full matrix quarterly.
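A sketch of the ranking step, assuming each factor is scored 1-5 with higher always meaning "better to pursue" (so 5 for difficulty means weak current citations, and 5 for effort means little work). The gap names and scores are illustrative.

```python
from math import prod

FACTORS = ("demand", "difficulty", "effort", "business_value")


def rank_gaps(gaps: dict[str, dict[str, int]]) -> list[tuple[str, int]]:
    """Sort gaps by the product of their four factor scores, highest first."""
    scored = [(name, prod(scores[f] for f in FACTORS)) for name, scores in gaps.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


gaps = {
    "CRM vs spreadsheet": {"demand": 4, "difficulty": 4, "effort": 3, "business_value": 5},
    "best PM tool for agencies": {"demand": 5, "difficulty": 2, "effort": 4, "business_value": 4},
    "CRM glossary": {"demand": 3, "difficulty": 5, "effort": 5, "business_value": 1},
}
for name, score in rank_gaps(gaps):
    print(f"{score:>4}  {name}")
```

Multiplying, rather than adding, means a 1 on any factor drags the whole score down, which is why the low-value glossary ranks last despite being easy.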

Common Content Gaps by Industry

| Industry | Most Common Gap | Why AI Cites Competitors | Quick Fix |
|---|---|---|---|
| Healthcare | Condition-specific FAQ pages | Competitors have structured Q&A with cited research | Create FAQ pages with citations + physician bylines |
| B2B SaaS | Honest comparison pages | Competitors own "X vs Y" queries | Build comparison pages with feature tables |
| Local Services | Service-area pages | Competitors have location + service + credential details | Pages for each service + city with LocalBusiness schema |
| E-commerce | Buying guides with criteria tables | Competitors have decision-matrix content | "How to choose" guides with comparison tables |
| Professional Services | Practice-area deep dives | Competitors have jurisdiction-specific content | Scenario-specific expertise articles |

From Gap Analysis to Content Roadmap

Turn your gap analysis findings into a prioritized 90-day production plan. Divide the plan into three tiers:

- Days 1-30: Restructure existing content that has the right topic but wrong format. Add comparison tables, FAQ sections, front-loaded claims, and schema markup to your highest-scoring pages.

- Days 31-60: Create new content for "missing entirely" gaps where business value is highest. Prioritize formats that match the citation patterns you observed in Step 2.

- Days 61-90: Build third-party distribution for your strongest content. Place articles on industry publications, contribute to roundups, and pitch expert commentary to sites that AI models already cite.

The most valuable gap finding is not "we have no content." It is "we have content but it loses to a competitor with better structure." Restructuring a 2,000-word article to add comparison tables, FAQ sections, and cited statistics often produces faster citation gains than creating new content from scratch.

How Do You Create Content That AI Systems Cite?

You create AI-citable content by structuring every section as a standalone, extractable passage with a direct answer, supporting evidence, and clear sourcing. This means research-backed briefs, LLM-optimized outlines, and rich content production for both new and existing pages.

LLM-Optimized Content

Content optimized for AI extraction looks different from content optimized for scanning readers. The key difference: AI models extract passages, not pages. Every section of your content needs to function as a standalone, citable unit.

Structure for extraction. Use clear H2 and H3 headings that match natural questions. Place the direct answer in the first 1-2 sentences after the heading. Follow with supporting evidence (statistics, examples, sources).

Evidence-rich content. The Princeton GEO study found that content with embedded citations, statistics, and quotations earned 30%+ more visibility in certain experimental settings. Every major claim on your page should include a data point, a named source, or a specific example.

Tables and structured data. AI systems extract tabular data efficiently. Comparison tables, feature matrices, pricing tables, and specification lists all increase extractability.

FAQ blocks. Implement FAQ sections with clear question-answer pairs. These map directly to the question-answer format AI systems prefer.

Before and after example 1: B2B content

Before: Email marketing is really important for businesses. It can help you reach customers and grow your revenue. Many companies find that email is one of their best channels for communication.

After: Email marketing generates an average ROI of $36 for every $1 spent (Litmus, 2023). It outperforms social media, paid search, and display advertising on a cost-per-acquisition basis. For B2B companies, email drives 3x more conversions than social media channels (McKinsey).

The second version states specific claims, names sources, and provides numbers. AI systems can extract and cite specific facts from it. The first version contains no citable information.

Before and after example 2: Local service business

Before: We are a family-owned plumbing company that has been serving the community for many years. Our team is dedicated to providing quality service. We handle all types of plumbing needs and always put the customer first.

After: Rivera Plumbing has served the greater Austin, TX area since 2009, completing over 12,000 residential and commercial jobs. The company holds a Texas State Board of Plumbing Examiners Master License (M-41827) and maintains a 4.9-star average across 840+ Google reviews. Rivera specializes in tankless water heater installation, slab leak detection, and whole-home repiping for pre-1980 construction.

The second version includes verifiable facts: location, founding date, license number, review count, and specific service areas. When an AI is asked "best plumber in Austin" or "who does slab leak repair in Austin, TX," the second version gives the model extractable, citation-worthy details. The first version gives it nothing to work with.

Before and after example 3: Healthcare practice

Before: We are a leading dermatology practice offering a wide range of skin care treatments. Our experienced team provides personalized care for all your dermatological needs.

After: Clearview Dermatology is a board-certified dermatology practice in Denver, CO, founded in 2011. The clinic treats over 4,200 patients annually across 23 skin conditions, with a focus on melanoma screening, acne treatment, and cosmetic dermatology. Clearview holds a 4.9 rating from 680+ Google reviews and accepts 14 major insurance plans.

The same pattern applies. The "after" version states verifiable facts (location, founding year, patient volume, specialties, review count, insurance details) that AI models can extract and cite. The "before" version offers no specific information worth referencing.

GEO Content Tactics

These tactics come from Generative Engine Optimization research. They focus on making your content easier for AI systems to extract into generated responses.

- Embed statistics with named sources. "Email marketing delivers $36 ROI per $1 spent (Litmus, 2023)" is citable. "Email marketing has great ROI" is not.

- Use direct quotes from recognized experts. Attributed quotations give AI models a specific, authoritative passage to extract.

- Structure content with claim-evidence-source patterns. State the claim. Provide the evidence. Name the source. Every major paragraph should follow this pattern.

- Format key information in extractable blocks. Tables, numbered lists, definition pairs, and comparison matrices. AI models extract structured information more reliably than dense prose.

- Write self-contained paragraphs. Each paragraph should make sense if extracted in isolation. Avoid paragraphs that depend on surrounding context for meaning.
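A quick way to enforce the claim-evidence-source pattern on a draft is to flag sentences that contain a number but no named source. The heuristics here are assumptions: a "number" is any digit, and a "source" is a parenthetical starting with a capital letter, like "(Litmus, 2023)".

```python
import re

HAS_NUMBER = re.compile(r"\d")
# A parenthetical that starts with a capital letter, e.g. "(Litmus, 2023)".
HAS_SOURCE = re.compile(r"\([A-Z][^()]*\)")


def unsourced_claims(text: str) -> list[str]:
    """Return sentences that contain a number but no parenthetical source."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if HAS_NUMBER.search(s) and not HAS_SOURCE.search(s)]


draft = (
    "Email marketing delivers $36 ROI per $1 spent (Litmus, 2023). "
    "Email drives 3x more conversions than social. "
    "Email marketing has great ROI."
)
print(unsourced_claims(draft))
```

The check flags only the second sentence. It cannot catch vague claims with no numbers at all, like the third sentence, so a human read is still needed.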

AEO Content Tactics

These tactics come from Answer Engine Optimization. They focus on structuring content to be selected as direct answers to user queries.

- Question-matching headings. If users ask "How much does X cost?", your heading should be "How Much Does X Cost?" followed by a direct numerical answer in the first sentence.

- Answer-first paragraphs. State the answer, then explain. Do not build to a conclusion. The conclusion goes first.

- FAQ schema implementation. Mark up question-answer pairs with FAQ schema so search engines and AI systems can identify them structurally.

- Structured data mapping. Use HowTo schema for process content, FAQ schema for Q&A content, and Product schema for product information. Each schema type maps your content to specific query types.
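A minimal sketch of FAQPage markup generated from question-answer pairs, using the schema.org `FAQPage`, `Question`, and `Answer` types. The question and answer text are placeholders written answer-first. Since August 2023, Google shows FAQ rich results only for well-known, authoritative government and health sites, so for most sites the markup's value is in labeling the Q&A structure rather than in a visual search enhancement.

```python
import json


def faq_page_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


print(faq_page_jsonld([
    ("How much does slab leak repair cost?",
     "Most slab leak repairs cost between $X and $Y, depending on access method."),
]))
```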

For a step-by-step guide to applying these tactics specifically to Google's AI features, see how to optimize content for Google AI Overviews.

How Does Topical Authority Drive AI Citations?

Topical authority drives AI citations because AI systems evaluate whether a source has comprehensive, interconnected coverage of a subject before deciding to cite it. Dense content clusters, internal linking, semantic coverage, and training data influence all signal that your brand owns your category.

Content Clusters and Internal Linking

AI systems evaluate whether a source has comprehensive coverage of a topic, not just a single relevant page. This is topical authority: the depth and breadth of your content on a subject.

Content clusters. Build clusters of 10-30 articles around core topics. A pillar page covers the topic broadly. Supporting articles cover every subtopic in depth.

Internal links connect them. When an AI system retrieves content from your site on a topic and finds 20 related pages, it has more confidence in your authority than a site with a single article.

A SaaS company targeting "project management software" might build a cluster of 15-20 pages: a pillar guide, comparison pages (vs. Asana, vs. Monday, vs. ClickUp), how-to guides, integration tutorials, and industry-specific use cases.

Each page links to the pillar. The pillar links to all of them. AI models see this interconnected coverage and treat the source as a category authority.

Internal linking. Every article should link to related content on your site. This helps AI crawlers discover your full content depth. It also signals topical relationships that AI models use to assess authority.
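The pillar-and-cluster pattern is easy to audit once you have a map of internal links, for example from a crawl of your own site. A sketch that lists the links the pattern expects but the site lacks; the page paths are hypothetical.

```python
def missing_cluster_links(pillar: str, supporting: list[str],
                          links: dict[str, set[str]]) -> list[tuple[str, str]]:
    """List (from_page, to_page) links the pillar/cluster pattern expects but lacks."""
    missing = []
    for page in supporting:
        if page not in links.get(pillar, set()):
            missing.append((pillar, page))   # pillar should link to every page
        if pillar not in links.get(page, set()):
            missing.append((page, pillar))   # every page should link back
    return missing


links = {
    "/project-management": {"/pm-vs-asana", "/pm-vs-clickup"},
    "/pm-vs-asana": {"/project-management"},
    "/pm-vs-clickup": set(),
    "/pm-for-agencies": {"/project-management"},
}
print(missing_cluster_links(
    "/project-management",
    ["/pm-vs-asana", "/pm-vs-clickup", "/pm-for-agencies"],
    links,
))
```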

Depth Over Breadth

Ten thorough articles on a single topic beat one hundred surface-level articles on different topics. AI models assess expertise based on the specificity and completeness of your content, not volume alone.

Measure your content clusters against competitors. If the top-cited source for "project management software" has 25 pages covering the topic and you have 3, the gap is obvious. AI models see it too.

The goal is not to publish the most content. The goal is to cover your core topics with enough depth that an AI system could learn everything it needs about the subject from your site alone.

LLM SEO: Training Data Influence

LLM SEO focuses on how your brand appears in AI model responses through their training data and parametric memory. The information present when models are trained shapes their baseline "knowledge" of your brand. This section covers the tactics that influence that knowledge.

Cross-Platform Brand Consistency

AI models learn about your brand by ingesting information from hundreds of sources during training. If your company description, founding date, product positioning, or key claims differ across LinkedIn, Crunchbase, your website, press releases, and directory listings, the model learns conflicting facts. Conflicting facts reduce citation confidence. Audit every public profile and ensure your core brand facts are identical everywhere.

Wikipedia and Wikidata

Wikipedia and Wikidata are among the most heavily weighted sources in AI training data. A Wikipedia article about your company, if your organization meets notability guidelines, provides a structured, authoritative summary that AI models reference heavily. Wikidata entries feed structured entity data into multiple AI systems simultaneously. Neither is easy to earn, but both have disproportionate impact on how AI models represent your brand in their parametric memory.

The Training Data Test

Ask ChatGPT, Claude, and Gemini about your brand with their web search disabled. The responses you get reflect what the model learned during training. If the information is wrong, outdated, or missing, your training data footprint is weak. Use these baseline responses to identify gaps, then build presence on the authoritative sources (publications, directories, Wikipedia) that future training runs will ingest. For platform-specific tactics, see the guides on Claude SEO and Perplexity SEO.

How Do Off-Site Citations and Community Signals Boost AI Visibility?

Off-site citations boost AI visibility because AI models do not trust your website alone; they look for corroboration across third-party sources before recommending a brand. Link building, outreach, vertical directories, community signals, and video all contribute the external mentions that AI models weigh when deciding who to cite.

Citation Source Hierarchy

Third-party citations from authoritative sources significantly increase your probability of being cited or recommended.

For a data-driven approach to tracking your citation share, see the AI Share of Voice methodology.

Not all sources carry equal weight. These are the source types AI models draw on most:

- Major publications and news outlets. Coverage in industry publications, news sites, and recognized media.

- Review platforms. Detailed reviews on platforms like G2, Trustpilot, and industry-specific review sites.

- Comparison and listicle articles. "Best X for Y" articles on authoritative blogs and publications.

- Industry directories. Professional association directories, chamber of commerce listings, accreditation bodies.

- Forums and community mentions. Reddit threads, Stack Overflow answers, Quora responses, niche forums.

- Expert citations. Guest posts, podcast appearances, conference talks, expert roundups.

- Wikipedia and Wikidata. The most structured and widely-ingested knowledge sources for AI training data.

Vertical Citation Map

Different industries have different high-authority citation sources. Target the ones that matter for your vertical.

| Industry | Top citation sources |
|---|---|
| Healthcare | Healthgrades, WebMD, Mayo Clinic references, PubMed, medical association directories, state licensing boards |
| Legal | Avvo, Martindale-Hubbell, FindLaw, state bar directories, Super Lawyers, legal journals |
| SaaS | G2, Capterra, TrustRadius, Product Hunt, TechCrunch, industry analyst reports (Gartner, Forrester) |
| Local services | Google Business Profile, Yelp, BBB, Angi, HomeAdvisor, local chamber directories, Nextdoor |
| E-commerce | Amazon reviews, product comparison sites, Consumer Reports, niche review blogs, YouTube reviews |
| Finance | NerdWallet, Bankrate, Investopedia, SEC filings, FINRA BrokerCheck, industry compliance databases |
| Agencies | Clutch, DesignRush, GoodFirms, case study publications, HubSpot partner directory, industry awards |

Prioritize getting comprehensive, accurate, and positive representation on the sources most relevant to your vertical. AI models draw from these exact platforms when generating recommendations.


The principle: be present and well-represented on every platform where your industry's authoritative information lives. AI models synthesize information across these sources. Gaps create uncertainty, and uncertainty means fewer citations.

Building a Citation Strategy

The most efficient citation building follows this process. First, audit which platforms your competitors are listed on that you are not. Second, prioritize platforms by authority (publications and review sites before directories). Third, create or claim profiles with complete, accurate information. Fourth, actively generate reviews and testimonials on the highest-priority platforms.
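The first two steps can be sketched as a set difference plus a sort. This assumes you have listed which platforms each competitor appears on; the category labels and their priority order (publications, then review sites, then directories) encode the ordering described above, and the sample platforms are illustrative.

```python
from collections import Counter

# Higher number = higher priority: publications and review sites before directories.
CATEGORY_PRIORITY = {"publication": 3, "review": 2, "directory": 1}


def platform_gaps(yours: set[str], competitors: dict[str, set[str]],
                  category: dict[str, str]) -> list[tuple[str, int]]:
    """Platforms competitors use that you lack, as (platform, competitor count).

    Sorted by category priority, then by how many competitors are listed there.
    """
    counts = Counter(p for listed in competitors.values() for p in listed - yours)
    return sorted(
        counts.items(),
        key=lambda item: (CATEGORY_PRIORITY.get(category.get(item[0], ""), 0), item[1]),
        reverse=True,
    )


yours = {"LinkedIn", "Google Business Profile"}
competitors = {
    "Competitor A": {"G2", "Capterra", "Clutch", "LinkedIn"},
    "Competitor B": {"G2", "TechCrunch"},
    "Competitor C": {"Capterra", "G2"},
}
category = {"G2": "review", "Capterra": "review", "Clutch": "directory", "TechCrunch": "publication"}
print(platform_gaps(yours, competitors, category))
```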

A common mistake is treating citation building as a one-time project. Citation maintenance is ongoing. Profiles need updated information. Review platforms need fresh reviews. Publication coverage needs sustained PR effort. Allocate a portion of monthly marketing effort to citation building permanently.


Community and UGC Signals

Reddit, Quora, Stack Overflow, and niche forums appear frequently in AI training data and real-time search results. User-generated content about your brand shapes how AI models perceive and recommend you.

Positive, detailed mentions of your brand on Reddit carry weight. When users describe specific experiences with your product or service, those descriptions become part of the information AI models draw from.

Monitor brand mentions across community platforms. Engage authentically. Encourage customers to share specific, detailed experiences.

A Reddit comment that says "I used [Brand] for [specific use case] and the result was [specific outcome]" is significantly more valuable than a generic positive review.

Do not attempt to manipulate community platforms with fake accounts or astroturfing. Community platforms actively detect manipulation.

Authentic engagement compounds over time. Inauthentic engagement creates risk.

The practical approach: make it easy for satisfied customers to share their experience. Provide specific prompts ("What specific problem did this solve for you?").

Link to relevant community threads. Feature customer stories on your own site.

The goal is generating genuine, detailed brand mentions that become part of the information ecosystem AI models draw from.

YouTube and Video

YouTube content appears in AI responses at a remarkable rate. According to Surfer's AI citation report (2025), YouTube is cited in approximately 23.3% of Google AI Overviews in their sample. No other single domain comes close to that citation frequency.

YouTube videos with clear titles, detailed descriptions, chapter markers, and transcripts give AI systems multiple extraction points. A well-structured tutorial video with timestamps and a thorough description can earn citations for dozens of related queries.
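Chapter markers are plain timestamp lines in the video description. The helper below is a hypothetical sketch that formats them. It checks the rules we understand YouTube to apply: the first chapter starts at 0:00, there are at least three chapters, and each chapter runs at least 10 seconds. Confirm these against YouTube's current help documentation before relying on them.

```python
def format_chapters(chapters, video_length_s):
    """Build a chapter list for a YouTube description.

    chapters: list of (start_seconds, title) tuples, in ascending order.
    video_length_s: total video length in seconds.
    Validation reflects our understanding of YouTube's chapter rules (verify them).
    """
    if len(chapters) < 3:
        raise ValueError("need at least three chapters")
    if chapters[0][0] != 0:
        raise ValueError("first chapter must start at 0:00")

    # Each chapter ends where the next one starts; the last ends with the video.
    ends = [start for start, _ in chapters[1:]] + [video_length_s]
    lines = []
    for (start, title), end in zip(chapters, ends):
        if end - start < 10:
            raise ValueError(f"chapter {title!r} is shorter than 10 seconds")
        hours, rest = divmod(start, 3600)
        minutes, seconds = divmod(rest, 60)
        stamp = f"{hours}:{minutes:02d}:{seconds:02d}" if hours else f"{minutes}:{seconds:02d}"
        lines.append(f"{stamp} {title}")
    return "\n".join(lines)


print(format_chapters([(0, "Intro"), (45, "Setup"), (180, "Results")], video_length_s=300))
```

Descriptive chapter titles matter as much as the timestamps. Each title is a short, quotable label for one segment of the video.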

If your business produces any form of educational or explanatory content, YouTube should be part of your AI SEO strategy. The citation data makes this non-negotiable.

How Do You Track AI Visibility Across Platforms?

You track AI visibility by monitoring your citations across AI platforms on a regular schedule, using tools that query ChatGPT, Perplexity, Gemini, and AI Overviews for your target terms. Without tracking, you are optimizing blind. With it, every decision is backed by data.

Tools for Ongoing Monitoring

AI visibility tools fall into three tiers based on budget and sophistication. Choose the tier that matches your resources, but make sure you're tracking something.

Full platforms offer automated query tracking, competitive benchmarking, and historical trend analysis. The leading options include Otterly, Peec AI, Profound, and the Semrush AI Toolkit. These run your target queries across multiple AI platforms on a schedule and produce dashboards showing citation frequency, position, and sentiment over time. Expect to pay $100-500/month depending on query volume and platform coverage.

Budget-friendly trackers like Waikay and RankScale offer core citation tracking at a lower price point. They cover fewer platforms and lack some advanced features (competitive analysis, sentiment tracking), but they handle the essentials: did we get cited, for which queries, and how often. Suitable for smaller teams or those starting out with AI visibility measurement.

DIY monitoring remains viable for lean teams. Run your target queries manually in ChatGPT, Perplexity, and Gemini once per month. Record results in a spreadsheet with columns for query, platform, date, cited (yes/no), position, and competitor mentions. This approach is free but labor-intensive. It works for 20-30 queries but becomes unmanageable beyond that. For a complete methodology on structuring your tracking, read the AI Share of Voice guide.
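If the spreadsheet starts to sprawl, a small script can keep the columns consistent from month to month. This is a sketch under assumptions: the CitationCheck schema is hypothetical and simply mirrors the columns listed above. Its output is CSV text that any spreadsheet tool can import.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date
from typing import Optional


@dataclass
class CitationCheck:
    """One manual run of one query on one platform (hypothetical schema)."""
    query: str
    platform: str            # e.g. "ChatGPT", "Perplexity", "Gemini"
    run_date: date
    cited: bool
    position: Optional[int]  # 1 = first brand mentioned; None if not cited
    competitors: str         # competitor names seen in the answer, ";"-separated


def to_csv(checks):
    """Serialize checks to CSV text with one row per run."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(CitationCheck)])
    writer.writeheader()
    for check in checks:
        row = asdict(check)
        row["run_date"] = check.run_date.isoformat()
        row["cited"] = "yes" if check.cited else "no"
        row["position"] = "" if check.position is None else check.position
        writer.writerow(row)
    return buf.getvalue()


csv_text = to_csv([
    CitationCheck("best CRM for small business", "ChatGPT", date(2026, 1, 15), True, 2, "CompetitorA;CompetitorB"),
    CitationCheck("best CRM for small business", "Perplexity", date(2026, 1, 15), False, None, "CompetitorA"),
])
print(csv_text)
```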

GA4 and Search Console for AI Traffic

Dedicated AI tracking tools show where you're cited. GA4 and Search Console show what happens when that citation drives a click. Together, they answer the full question: are AI citations actually sending traffic, and does that traffic convert?

GA4 AI Referral Tracking

ChatGPT dominates AI referral traffic, accounting for roughly 87% of all identifiable AI referrals according to Semrush data from 2025. Perplexity is a distant second but sends the most reliable referral data because it passes a clean referrer string with every click.

To isolate AI traffic in GA4, create a custom exploration with these filters:

- Session source contains chatgpt.com

- Session source contains perplexity.ai

- Session source matches google AND landing page contains AI parameters

- Session source contains copilot.microsoft.com

Combine these with an OR condition to create a single AI Referral segment. Apply it to any standard report to see AI-sourced sessions, engagement rates, and conversions alongside your other channels.
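The same OR logic can be applied offline to exported GA4 data. This is a minimal sketch that assumes you have exported (session source, converted) pairs, for example to CSV. The export shape is hypothetical. The Google-plus-landing-page condition is omitted because the AI parameter it depends on is not specified here. The regex string is simple enough that it should also work in a regex-match condition in GA4, but test it in your own property.

```python
import re

# Hostnames from the GA4 filters above, joined with OR (|).
AI_SOURCE_PATTERN = re.compile(r"chatgpt\.com|perplexity\.ai|copilot\.microsoft\.com")


def is_ai_referral(session_source):
    """True if a session source value matches any of the AI referral filters."""
    return AI_SOURCE_PATTERN.search(session_source.lower()) is not None


def summarize_ai_sessions(sessions):
    """sessions: iterable of (session_source, converted) pairs from an export.

    Returns (number of AI-referred sessions, their conversion rate).
    """
    ai_outcomes = [converted for source, converted in sessions if is_ai_referral(source)]
    rate = sum(ai_outcomes) / len(ai_outcomes) if ai_outcomes else 0.0
    return len(ai_outcomes), rate


sample = [
    ("chatgpt.com", True),
    ("chatgpt.com", False),
    ("google", True),
    ("perplexity.ai", False),
    ("(direct)", False),
]
print(summarize_ai_sessions(sample))
```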

Most AI-driven traffic arrives with no AI-specific referrer. Users copy a recommendation from ChatGPT, type it into their browser, and land as direct traffic. Others click a link in an AI response that passes through a Google redirect, arriving as organic. Your GA4 AI referral numbers undercount actual AI-influenced visits significantly. Treat them as a floor, not a ceiling.

The AI Overview Blind Spot

Google AI Overviews pass no referrer data to GA4. When a user clicks a link inside an AI Overview, that click gets lumped into regular organic search traffic. There is no parameter, no UTM tag, no referrer string that distinguishes it from a standard blue-link click.

Google Search Console added AI Mode click tracking in June 2025, which lets you see impressions and clicks for queries that trigger AI Mode results. However, there is still no way to isolate AI Overview traffic from traditional search clicks in your analytics. AI Overviews and standard organic results are reported together.

This is the biggest attribution gap in AI SEO measurement. You can see in Search Console that your pages appear in AI Mode results. You can see the click counts. But you cannot trace those clicks through to conversion in GA4. Until Google provides a distinguishing referrer parameter, this gap will persist. Factor it into your reporting: your true AI-influenced traffic is almost certainly higher than what any tool reports.

AI Traffic Conversion Rates

AI referral traffic converts at dramatically higher rates than traditional organic. According to research by Onely and Superlines, AI referral traffic converts at 4.4x the rate of traditional organic search. The reason is intent pre-qualification: by the time a user clicks through from an AI response, the AI has already narrowed their options, explained the tradeoffs, and effectively recommended your product. They arrive ready to act.

| Platform | Avg Conversion Rate | Referrer String | Attribution Quality |
|---|---|---|---|
| ChatGPT | 5-16% | chatgpt.com | Good (clear referrer) |
| Perplexity | 8-11% | perplexity.ai | Excellent (consistent referrer) |
| Google AI Overviews | Unknown (no isolation) | google.com (mixed with organic) | None (indistinguishable) |
| Microsoft Copilot | 3-8% | copilot.microsoft.com | Moderate (inconsistent) |
| Gemini | 2-6% | gemini.google.com | Moderate (limited traffic) |

Compare these numbers to traditional organic search, which typically converts at 1-3%. At a 4.4x multiplier, even modest AI referral traffic can drive meaningful revenue. A page receiving 200 AI referral visits per month at an 8% conversion rate produces 16 conversions. Producing the same 16 conversions would take roughly 530 to 1,600 traditional organic visits at a 1-3% conversion rate.
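Here is the example's arithmetic worked through for the full 1-3% organic range. The rates are the illustrative figures from the text, not benchmarks for any particular site.

```python
def ai_conversions(visits, conversion_rate):
    """Expected conversions from AI referral visits."""
    return visits * conversion_rate


def equivalent_organic_visits(conversions, organic_rate):
    """Organic visits needed to produce the same number of conversions."""
    return conversions / organic_rate


conversions = ai_conversions(200, 0.08)  # the example page above
for organic_rate in (0.01, 0.02, 0.03):
    visits = equivalent_organic_visits(conversions, organic_rate)
    print(f"{organic_rate:.0%} organic rate: {round(visits)} visits for {conversions:.0f} conversions")
```

To use your own figures, replace the 200 visits and 8% rate with numbers from your GA4 AI referral segment.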

Response Variability and Statistical Confidence

AI responses are non-deterministic. Ask the same question twice and you may get different sources cited, different recommendations, and different brand mentions. This is not a bug. It is how large language models work. Temperature settings, retrieval index updates, and the constantly shifting web all contribute to variation.

This variability has direct implications for measurement. A single query check is meaningless. You might run "best CRM for small business" in ChatGPT, see your brand mentioned, and celebrate. Run it again an hour later and your brand is gone. Neither data point alone tells you anything useful.

Minimum viable tracking: run each target query at least 3 times per platform per month. Record each result independently. If your brand appears in 2 out of 3 runs, your citation rate for that query is approximately 67%. If it appears in 0 out of 3, you have a consistent absence. Three runs is the floor for detecting whether a citation is real or a fluke.
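A citation rate from a handful of runs is an estimate, not a fixed number. The sketch below computes the rate and adds a Wilson score interval to show how much uncertainty remains. The interval is our addition, not something this guide prescribes. Here it returns roughly 21% to 94% for 2 of 3 runs, which is why three runs is a floor and trends across months carry more weight than any one reading.

```python
import math


def citation_rate(runs):
    """runs: booleans, one per independent run of the same query on one platform."""
    return sum(runs) / len(runs)


def wilson_interval(cited, total, z=1.96):
    """Approximate 95% Wilson score interval for a citation rate (z=1.96)."""
    if total == 0:
        raise ValueError("need at least one run")
    p = cited / total
    denom = 1 + z**2 / total
    centre = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


print(citation_rate([True, True, False]), wilson_interval(2, 3))
```

More runs per month narrow the interval. Use this to decide whether a high-priority query deserves extra checks.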

Track trends over 3 or more months, not single data points. A brand that was cited in 40% of runs in January, 55% in February, and 65% in March has a clear positive trajectory even though no single month's number is "definitive." Conversely, a drop from 80% to 60% over two months signals a problem worth investigating.

Don't panic over a single missing citation. Check if it's a pattern. One disappearance is noise. Three consecutive months of declining citation rate is a signal. The difference between reacting to noise and responding to signals is the difference between wasted effort and effective optimization.
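The noise-versus-signal rule can be written down so it is applied the same way every month. This is a minimal sketch that assumes "three consecutive months of declining citation rate" means three month-over-month drops in a row. It encodes only that rule. A sharp drop such as 80% to 60% over two months still deserves a manual look.

```python
def month_over_month(rates):
    """rates: monthly citation rates (0-1), oldest first. Returns changes in percentage points."""
    return [round((later - earlier) * 100, 1) for earlier, later in zip(rates, rates[1:])]


def is_declining_signal(rates, months=3):
    """True if the last `months` month-over-month changes are all declines."""
    changes = month_over_month(rates)
    if len(changes) < months:
        return False  # not enough history to call it a pattern
    return all(change < 0 for change in changes[-months:])


print(month_over_month([0.40, 0.55, 0.65]))         # the January-March example above
print(is_declining_signal([0.80, 0.70, 0.60, 0.50]))
```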

How Do You Build an AI Visibility Measurement Dashboard?

Track AI visibility monthly at minimum. AI systems update their retrieval indexes continuously, and models receive major updates quarterly or more frequently. Monthly measurement catches trends. Weekly measurement is ideal for competitive categories where citation rankings shift rapidly.

Whether you use a dedicated platform or a spreadsheet, your dashboard needs these columns:

| Column | What to Record | Why It Matters |
|---|---|---|
| Query | The exact prompt used | Enables consistent month-over-month comparison |
| Platform | ChatGPT, Perplexity, Gemini, etc. | Each platform has different source preferences |
| Date | Date of the query run | Tracks change over time |
| Cited (Y/N) | Whether your brand was mentioned | Core visibility metric |
| Position | 1st mentioned, 2nd, 3rd, or listed | First mention carries disproportionate influence |
| Sentiment | Positive, neutral, or negative | Being cited negatively is worse than not being cited |
| Competitor Cited | Which competitors appeared in the same response | Reveals your competitive position per query |

Over time, this data reveals which optimization efforts produce results and where to focus next. Aggregate the data monthly to calculate your AI Share of Voice: the percentage of tracked queries where your brand is cited. Compare it against your top 3-5 competitors to understand your relative position.
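A minimal sketch of the monthly aggregation follows. It assumes each dashboard row has been reduced to the set of brands cited in one recorded response (one query, one platform, one run). If you prefer to count per query, collapse the runs for each query first. Brand names are placeholders.

```python
from collections import Counter


def share_of_voice(responses, brands):
    """Percentage of recorded AI responses that cite each brand.

    responses: list of sets; each set holds the brands cited in one response.
    brands: your brand plus the competitors you benchmark against.
    """
    if not responses:
        raise ValueError("no responses recorded")
    counts = Counter(brand for cited in responses for brand in brands if brand in cited)
    return {brand: 100 * counts[brand] / len(responses) for brand in brands}


month = [{"OurBrand", "CompetitorA"}, {"CompetitorA"}, {"OurBrand"}, set()]
sov = share_of_voice(month, ["OurBrand", "CompetitorA", "CompetitorB"])
ranking = sorted(sov.items(), key=lambda item: item[1], reverse=True)
print(ranking)
```

Run the function on each platform's rows separately to get the per-platform rates that the monthly checklist asks for.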

Monthly AI SEO Review Checklist

Run this checklist once per month. It takes 2-4 hours for a comprehensive review. Schedule it on the same date each month so your data is consistently spaced for trend analysis.

- Run audit queries across all platforms. Execute your target queries (minimum 8 categories) across ChatGPT, Perplexity, and Gemini. Run each query at least 3 times. Record every result in your dashboard.

- Calculate AI Share of Voice. Tally citations across all queries and platforms. Compute your overall citation rate and per-platform rates. Compare to the previous month and flag any shifts greater than 10 percentage points.

- Check Search Console for AI Mode data. Review AI Mode impressions and clicks in Google Search Console. Note which queries trigger AI Mode appearances and track click-through rates month over month.

- Review GA4 for AI referral traffic. Check sessions from perplexity.ai, chatgpt.com, and copilot.microsoft.com sources. Compare volume and conversion rates to the previous month. Flag any significant changes.

- Compare branded search volume. Check branded search volume against 30-day and 90-day prior periods. AI citations often drive branded search as users verify recommendations. A sustained increase in branded queries correlates with growing AI visibility.

- Identify your top 5 cited pages. List the pages that earned the most AI citations this month. These are your strongest assets. Understand what makes them work so you can replicate the pattern.

- Flag pages that lost citations. Identify pages that were cited last month but not this month. Investigate: did the content go stale? Did a competitor publish something better? Did the page's structured data break?

- Benchmark competitor AI Share of Voice. Run the same queries for your top competitors. Calculate their citation rates. If a competitor gained ground, examine what changed in their content or link profile.

- Check content freshness on most-cited pages. AI models favor recent content. If your top pages haven't been updated in 3+ months, refresh them with current data, new examples, and updated statistics.

- Review third-party citations. Check for new reviews, mentions, directory listings, and press coverage. These signals reinforce your entity profile and increase citation probability. Specifically look at platforms identified in your vertical citation map.

- Verify AI crawler access. Check server logs for recent activity from GPTBot, OAI-SearchBot, ClaudeBot, Claude-SearchBot, and PerplexityBot. If crawl frequency dropped, investigate robots.txt changes, server errors, or rate limiting issues.

- Update your optimization roadmap. Based on all findings, reprioritize your next month's AI SEO tasks. Double down on what's working. Address gaps where competitors are winning. Retire tactics that show no impact after 3 months of data.
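The crawler-access check can be partly automated. This sketch assumes access logs in the common "combined" format, where the status code follows the quoted request line. It matches the crawler names from the checklist as plain user-agent substrings. The sample log lines and user-agent strings are illustrative, not copied from real crawler traffic. Status codes of 400 and above are counted separately because server errors and rate limiting (429) are two of the causes the checklist names.

```python
import re
from collections import Counter

# Crawler names from the checklist, matched as user-agent substrings.
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "Claude-SearchBot", "PerplexityBot"]
# In combined log format the status code follows the closing quote of the request.
STATUS_RE = re.compile(r'"\s(\d{3})\s')


def crawler_report(log_lines):
    """Count hits and error responses (status >= 400) per AI crawler."""
    hits, errors = Counter(), Counter()
    for line in log_lines:
        bot = next((name for name in AI_CRAWLERS if name in line), None)
        if bot is None:
            continue  # not an AI crawler we track
        hits[bot] += 1
        match = STATUS_RE.search(line)
        if match and int(match.group(1)) >= 400:
            errors[bot] += 1
    return hits, errors


sample_log = [
    '1.2.3.4 - - [01/Mar/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '5.6.7.8 - - [01/Mar/2026:10:05:00 +0000] "GET /blog HTTP/1.1" 429 0 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '9.9.9.9 - - [01/Mar/2026:10:06:00 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
hits, errors = crawler_report(sample_log)
print(dict(hits), dict(errors))
```

On a real server, pass an open file instead, for example `with open(path) as f: crawler_report(f)`, with `path` pointing at your own log. Compare the monthly hit counts to spot a drop in crawl frequency.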

Consistency is what makes this checklist valuable. A single month's data is informative. Six months of consistent tracking reveals the patterns that drive real strategic decisions. The brands that win in AI search are the ones that measure relentlessly and iterate based on evidence, not assumptions.

How Do You Measure and Optimize AI SEO Performance?

You measure AI SEO performance by analyzing citation frequency, sentiment, and competitive share across platforms, then feeding those insights back into Phase 1 to drive the next optimization cycle. The cycle never stops.